This is a long write-up, but it is important to understand because it hints at a different direction for AI automation in 2026.

At the end of 2025 Anthropic formally released Anthropic Skills, a very simple idea that turned the need for Model Context Protocol (MCP) inside out. Anthropic’s models are all trained on coding, so why not just give Claude an outline (i.e. a Markdown file) of what you want it to do and let it create scripts on the fly to achieve your wish? That one idea got rid of the need for 99 percent of the MCP servers that had been developed during the previous year.

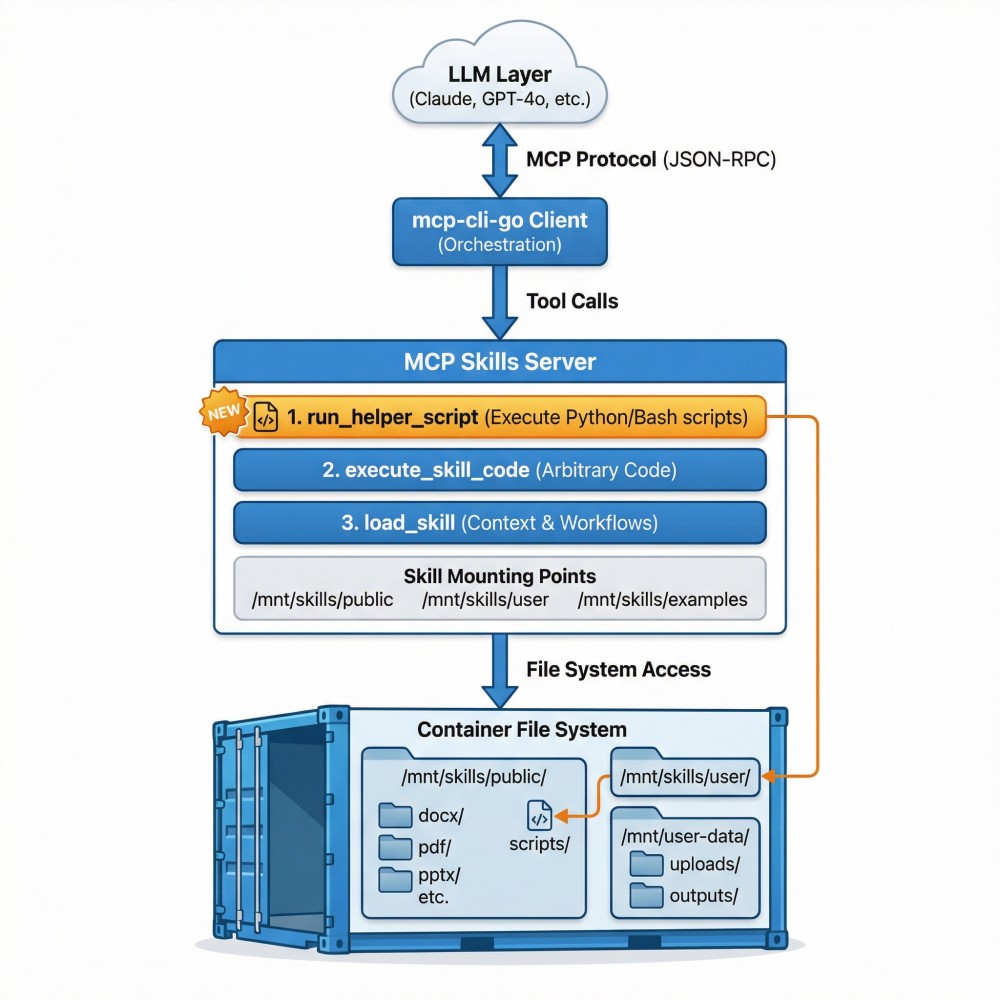

I previously wrote about creating an MCP server that presented Anthropic Skills to any LLM, set up so that the various skills would run in containers of my choosing, built with tools of my liking. That in itself was quite stunning: much cheaper models than Claude were using the same Skills approach to write their own code when required.

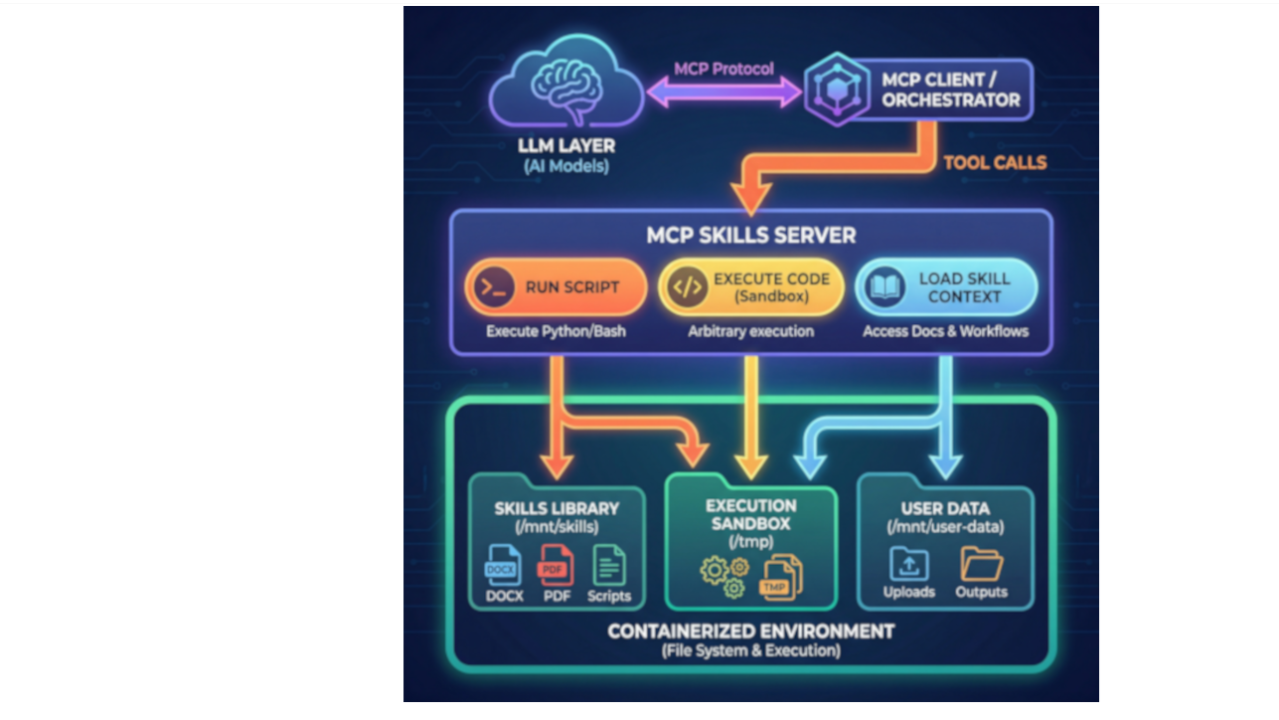

The MCP “Skills” server was pretty straightforward.

- It used a Load Skill tool to load the SKILL.md content for the LLM to progressively read.

- It also had an Execute Code tool which, like Anthropic’s, allowed the LLM to create and execute code on an associated Docker container.

- I created a third "Run Helper Script" tool as a slight divergence from the Anthropic pattern as I tried to improve my testing results with small LLMs.
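The three tools above can be pictured as a small dispatch table. This is a minimal sketch and not the actual server: the function names, the JSON shape of a tool call, and the `scripts/` sub-folder are assumptions made for illustration, and the two execution tools are placeholders that do not touch Docker.

```python
import json
import tempfile
from pathlib import Path


def load_skill(root: Path, skill: str) -> str:
    """Load Skill: return the SKILL.md text for the LLM to read."""
    return (root / skill / "SKILL.md").read_text(encoding="utf-8")


def execute_code(root: Path, code: str) -> str:
    """Execute Code: placeholder only. A real server would hand the
    model-written code to a container; that part is omitted here."""
    return "not implemented in this sketch"


def run_helper_script(root: Path, skill: str, script: str) -> str:
    """Run Helper Script: placeholder that only resolves the script path
    (the scripts/ folder name is an assumption)."""
    return str(root / skill / "scripts" / script)


# Hypothetical tool names mapped to their handlers.
TOOLS = {
    "load_skill": load_skill,
    "execute_code": execute_code,
    "run_helper_script": run_helper_script,
}


def dispatch(root: Path, tool_call: str) -> str:
    """Route one JSON tool call from the LLM to the matching handler."""
    call = json.loads(tool_call)
    handler = TOOLS.get(call.get("name"))
    if handler is None:
        return f"unknown tool: {call.get('name')}"
    return handler(root, **call.get("arguments", {}))


# Demo: build a throwaway skill folder and load it through the dispatcher.
with tempfile.TemporaryDirectory() as tmp:
    demo_root = Path(tmp)
    (demo_root / "demo-skill").mkdir()
    (demo_root / "demo-skill" / "SKILL.md").write_text(
        "# Demo skill\nRun scripts/hello.py\n", encoding="utf-8"
    )
    print(dispatch(demo_root, '{"name": "load_skill", "arguments": {"skill": "demo-skill"}}'))
```

Keeping the tool surface this small is part of the appeal for small models: there are only three names to choose from, and each takes one or two string arguments.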

Skills with a Recursive Language Model (RLM)

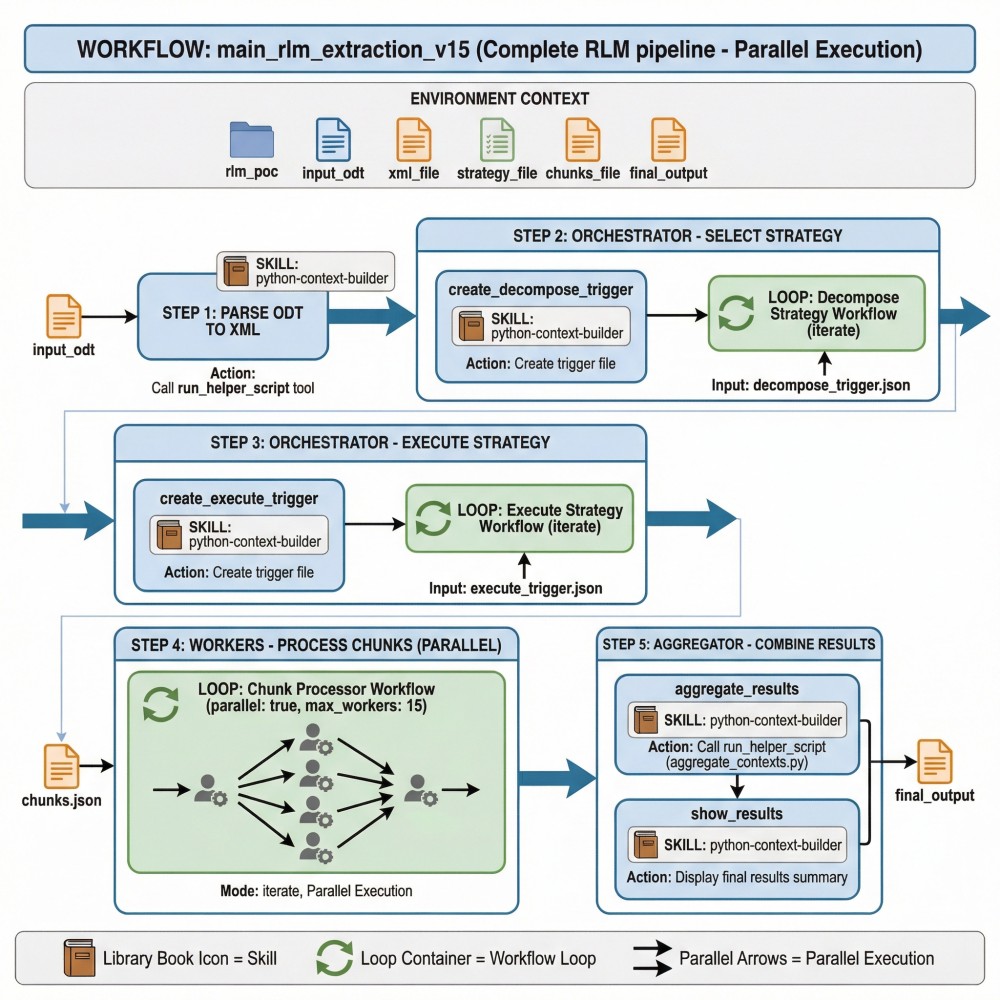

The new concept of a Recursive Language Model highlights that understanding a complex set of data (like a large document) is just a whole series of small tasks orchestrated over that data. If you can break a large input into smaller, manageable chunks, you can iteratively and recursively run multiple enrichment tasks on it. The benefit is that smaller, cheaper models can end up with context enrichment equivalent to what a very large flagship model achieves. If you like, you can also enable auditing in the recursion to keep a complete record of everything that happens at each step of the process.
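The recursion and the audit trail can be sketched in a few lines. This is only an illustration under stated assumptions: the input is a list of log lines, the "small task" is a plain Python function standing in for a call to a small LLM, and the names `recursive_enrich`, `count_errors`, and `max_lines` are hypothetical.

```python
from typing import Callable


def recursive_enrich(
    lines: list,
    task: Callable,
    combine: Callable,
    max_lines: int,
    audit: list,
    depth: int = 0,
):
    """Split the input until each chunk is small enough, run the task on
    every chunk, combine the partial results, and record each step."""
    if len(lines) <= max_lines:
        result = task(lines)
        audit.append({"depth": depth, "action": "task",
                      "lines": len(lines), "result": result})
        return result

    mid = len(lines) // 2
    left = recursive_enrich(lines[:mid], task, combine, max_lines, audit, depth + 1)
    right = recursive_enrich(lines[mid:], task, combine, max_lines, audit, depth + 1)
    merged = combine([left, right])
    audit.append({"depth": depth, "action": "combine",
                  "lines": len(lines), "result": merged})
    return merged


def count_errors(chunk: list) -> int:
    """Stand-in for a small-model task: count lines that mention ERROR."""
    return sum(1 for line in chunk if "ERROR" in line)


audit_log = []
log_lines = ["ok", "ERROR disk full", "ok", "ERROR timeout", "ok"]
total = recursive_enrich(log_lines, count_errors, sum, max_lines=2, audit=audit_log)
print(total)
for entry in audit_log:
    print(entry)
```

Every leaf task and every combine step lands in `audit_log`, so the final answer can be traced back to the chunk that produced each part of it.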

This concept solves significant problems for Security. It provides auditability of decision making, and it makes it practical to use AI in air-gapped on-prem environments. In Enterprise IT we have to consider the risk of Nation State Actors disrupting our access to cloud services. Being able to use AI locally in an audited manner is critical.

This is the background of what I’ve been working on. It’s the usability of small models that is central to this approach.

Reversing the Skills Concept

I love the Skills concept but I was disappointed to discover a bias toward writing Python with LLMs rather than bash. I wanted to use the smallest possible models and lightest possible containers.

Some things about my testing irritated me. When small models wrote code, I would often see initial errors followed by self-correction, all of which used tokens and added time to the process. I don’t like the unpredictable nature of GenAI with security services. Because the models are inherently weighted toward reaching a successful outcome, I was often surprised to see GenAI directly creating the expected outputs rather than writing reliable scripts that could report a valid failure.

If something complex is reduced to a series of small tasks, the easiest deterministic approach is to have a pre-written script that can simply be called. Anthropic Skills have a structure for helper scripts anyway, so adding an additional MCP tool to call them directly seemed to remove the problems I was encountering. Most of a workflow can be reduced to simple script execution, which confines the non-deterministic nature of the LLM to the steps that actually need semantic reasoning.
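A helper-script tool of this kind can be quite small. The sketch below is an assumption about how such a tool might look, not the code from my server: it runs locally with `subprocess` rather than in a container, and the function name, the allow-list check, and the returned fields are all hypothetical. The point it illustrates is that the exit code comes from the script itself, so a genuine failure is reported instead of being papered over.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def run_helper_script(scripts_dir: Path, script_name: str, args: list, timeout: int = 30) -> dict:
    """Run one pre-written script by name and report exactly what happened."""
    # Only files that already exist in the scripts folder may be called,
    # so the model cannot invent a script or escape the folder.
    allowed = {p.name for p in scripts_dir.iterdir() if p.is_file()}
    if script_name not in allowed:
        return {"ok": False, "exit_code": None, "stdout": "",
                "stderr": f"unknown script: {script_name}"}

    script = scripts_dir / script_name
    # Python scripts use the current interpreter; anything else goes to bash.
    interpreter = [sys.executable] if script.suffix == ".py" else ["bash"]
    proc = subprocess.run(
        [*interpreter, str(script), *args],
        capture_output=True, text=True, timeout=timeout,
    )
    return {"ok": proc.returncode == 0, "exit_code": proc.returncode,
            "stdout": proc.stdout.strip(), "stderr": proc.stderr.strip()}


# Demo with a throwaway script that echoes its arguments.
with tempfile.TemporaryDirectory() as tmp:
    demo_dir = Path(tmp)
    (demo_dir / "echo.py").write_text(
        "import sys\nprint(' '.join(sys.argv[1:]))\n", encoding="utf-8"
    )
    print(run_helper_script(demo_dir, "echo.py", ["hello", "world"]))
```

With this shape the LLM only has to pick a script name and its arguments; everything after that is ordinary, testable code.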

In the example I have been working with, the real semantic need is determining a chunking strategy for the input, which can then trigger subordinate tasks and workflows that each have pre-defined, tested scripts to call.

Using Skills for basic tool-calling… the results

By not requiring an LLM to write scripts on the fly, I drastically reduced both the tokens used and the processing time of workflows. I also gained assurance against hallucinations: the LLM’s instructions were simply to call a script, so it no longer had to comprehend what I wanted from a task, and it had no opportunity to skip to the end and just create successful-looking outputs.

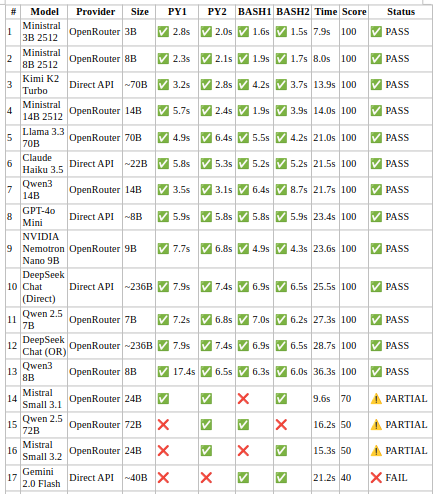

Complete Test Results – Tool Calling (scripts) via Skills

Now that I had removed most of the GenAI element from workflows, I wanted to see if models that had failed with writing code via Skills could succeed with just calling scripts from my containers when the workflow directed. The results with this testing still leave me stunned.

Test Methodology:

Each model tested with 4 steps:

- Python Test 1: Basic script execution

- Python Test 2: Script with parameters

- Bash Test 1: Shell script execution

- Bash Test 2: Shell script with parameters

Scoring System (100 points total):

- Completion: 40 points (10 per step)

- Conciseness: 30 points (responses should be 1 token: "Success")

- Reliability: 20 points (no retries needed)

- Speed: 10 points (based on total time)
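The weighting above can be written down as a small scoring function. The four category maximums come from the list above; how partial credit is awarded inside each category is not stated here, so the penalty rules in this sketch (one point per extra token, five points per retry, a linear speed scale between 10 and 60 seconds) are purely illustrative assumptions, as is the function name.

```python
def score_run(steps_completed: int, extra_tokens: int, retries: int, total_seconds: float) -> dict:
    """Score one model run out of 100 using the four categories above."""
    # Completion: 10 points for each of the 4 steps (from the scoring list).
    completion = 10 * max(0, min(steps_completed, 4))

    # Conciseness: 30 points maximum.
    # ASSUMPTION: lose 1 point for every token beyond the expected "Success".
    conciseness = max(0, 30 - extra_tokens)

    # Reliability: 20 points maximum.
    # ASSUMPTION: lose 5 points for each retry.
    reliability = max(0, 20 - 5 * retries)

    # Speed: 10 points maximum.
    # ASSUMPTION: full marks at 10 s or less, zero at 60 s or more, linear between.
    speed = max(0.0, min(10.0, 10.0 * (60.0 - total_seconds) / 50.0))

    return {
        "completion": completion,
        "conciseness": conciseness,
        "reliability": reliability,
        "speed": speed,
        "total": completion + conciseness + reliability + speed,
    }


# A clean run: all 4 steps, no extra tokens, no retries, 5 seconds in total.
print(score_run(steps_completed=4, extra_tokens=0, retries=0, total_seconds=5.0))
```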

Note that testing was run from Australia using OpenRouter, so processing times are heavily affected by the location of the LLM providers. What this demonstrates is that, by using pre-written scripts as scaffolding, very small models can be used to select and run bash and Python scripts from Skills containers.

All of the results are a major success, but Ministral 3B 2512 completely redefines the possibilities of local and edge AI. Knowing that a 3B parameter model can be dependable at tool calling via a Skills container means that you don’t actually need any real GPU to run AI semantic workflows successfully.

This has a lot of implications for Security.

We can define a whole set of scripts related to alert enrichment or incident response that can have activity tasks farmed out to tiny LLMs working in parallel at almost no cost. We can feasibly look at self-hosting AI for Security while also gaining auditability by breaking down the “black box” of AI into assessable components. We can also drastically reduce AI costs.
