OPINION: The Sentinel Data Lake is being heavily promoted as a game-changing development in SIEM. After two years of working with Microsoft's technology on a Big Data SIEM, I see it differently.

This is a long read, but it offers a different assessment from every other perspective I have come across.

Security Big Data - Competing Perspectives

My best guess is that the Sentinel Data Lake was devised with several competing Microsoft perspectives in mind:

• M-21-31 - In 2021, the US Federal Government mandated that federal agencies adopt a Big Data security monitoring capability, with log monitoring on all system telemetry and 2½ years' retention of unfiltered data. With increasingly sophisticated attacks, the security need for Big Data analytics is critical.

• Microsoft Sentinel - Security products have become a major pillar of Microsoft's revenue, with Sentinel carving out a dominant share of the SIEM market. At $11 AUD per GB, Sentinel is too expensive to become a Big Data SIEM, but Microsoft is incentivised to add Big Data capability to the product without reducing Sentinel income. Azure Data Explorer (Kusto) is already the Big Data security product Microsoft uses internally, but it sits in a different accounting stream from the Security division.

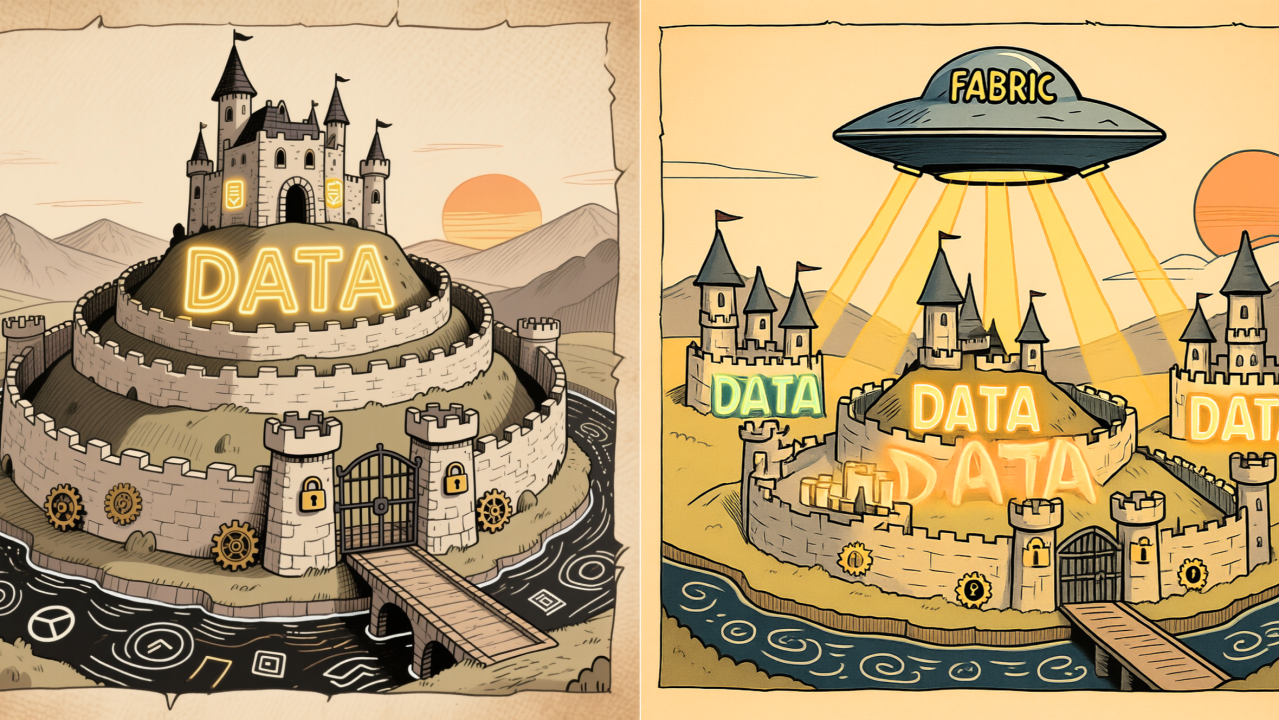

• Artificial Intelligence – AI's potential as a Microsoft revenue stream, like that of Machine Learning, depends on centralising as much data as possible in a unified cloud environment. Historically, Microsoft's separate ecosystems (PowerApps/Dynamics, Graph/O365, and Azure) have not integrated well, which has created technical problems. Microsoft has been quietly breaking down these silos with Fabric and the “Dataverse”, positioning Microsoft Fabric as the Data Engineer's new canvas for integrating data across the entire Microsoft universe.

The Big Data SIEM Jigsaw Puzzle

There are possibly five pieces needed to assemble a Big Data SIEM product with Microsoft technologies:

- Analysis of captured data

- Storage for Big Data

- Data ingest patterns

- Machine Learning and AI

- Microsoft Sentinel's role with alerting

My guess is that Microsoft has put the Big Data SIEM jigsaw together like this:

Analysis of captured data - For SOC teams, familiarity with KQL and seamless integration with Sentinel and Fabric make Kusto an undisputed piece of the puzzle. Kusto is a phenomenal piece of engineering and probably the best Big Data analytics platform on the market. Log Analytics is built on Kusto, and Kusto has also been released as Azure Data Explorer (a dedicated PaaS offering) and as EventHouse within Fabric.

A Big Data SIEM has to use Kusto, but Kusto's analytical flexibility leaves a design choice: either ingest data into a Kusto cluster, or stream data to a Storage Account and configure the "external tables" feature so Kusto can query that external data as required.

Storage for Big Data - Because Kusto can analyse data held inside or outside the product equally well, that design decision must rest on other criteria. Storage Account data can already be incorporated into Fabric as one Data Lake among many, which satisfies the M-21-31 obligation for federal organisations to prepare for ML and aligns Sentinel with the flat data landscape that AI developments need.

Data ingest patterns - The consequence of using Kusto with data streamed to a Storage Account is that someone has to define the schema in Kusto as an external table. That means Microsoft must control the streaming of data to that storage with known schemas (which is effectively what we have today with Log Analytics schemas and Azure Monitor Data Collection Rules). For this Big Data SIEM pattern to work, all data must be streamed through official data connectors that output Parquet files, while a shared Kusto cluster is configured to read the streamed data types.
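To make the external-table dependency concrete, here is a minimal Python sketch that builds the Kusto `.create external table` control command for a Parquet-backed table from a known schema. The table name, columns and storage URI are hypothetical placeholders, not an official Sentinel Data Lake schema; the point is simply that someone has to know and declare every column and type before Kusto can read the streamed files.

```python
from dataclasses import dataclass

# Kusto scalar types accepted by this sketch (a subset of the documented types).
KUSTO_TYPES = {"bool", "datetime", "decimal", "dynamic", "guid", "int",
               "long", "real", "string", "timespan"}


@dataclass(frozen=True)
class Column:
    name: str
    kusto_type: str


def external_table_command(table: str, columns: list, storage_uri: str) -> str:
    """Return a `.create external table` command over Parquet files at storage_uri."""
    for col in columns:
        if col.kusto_type not in KUSTO_TYPES:
            raise ValueError(f"unsupported Kusto type for {col.name}: {col.kusto_type}")
    schema = ", ".join(f"{col.name}:{col.kusto_type}" for col in columns)
    # h@'...' marks the connection string as obfuscated so Kusto scrubs it from traces;
    # ';impersonate' authenticates to storage with the caller's own identity.
    return (
        f".create external table {table} ({schema})\n"
        "kind=storage\n"
        "dataformat=parquet\n"
        f"(\n    h@'{storage_uri};impersonate'\n)"
    )


# Hypothetical table, columns and storage account, for illustration only.
print(external_table_command(
    "SigninLogsExternal",
    [Column("TimeGenerated", "datetime"), Column("UserPrincipalName", "string"),
     Column("ResultType", "string")],
    "https://examplelake.blob.core.windows.net/signinlogs",
))
```

If the streamed files drift from the declared schema (a renamed column, a changed type), the external table would need to be altered to match, which is why whoever controls the schemas effectively controls the ingestion pattern.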

Storage implementation - Hierarchical Namespace Storage (a Data Lake) is already supported for ML integration with Fabric. This satisfies one of the M-21-31 regulatory requirements.

Integration with Sentinel - Events in the Data Lake are completely isolated from Sentinel, but a deliberately restricted data pipeline mechanism allows data from the Data Lake to be ingested into Sentinel on a daily schedule. This means Sentinel customers can store much larger volumes of log data, while any logs that need real-time alerting must still go through Sentinel at $11 per GB.
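The split described above is ultimately arithmetic. The sketch below uses the $11 AUD per GB figure quoted in this article for data routed through Sentinel for real-time alerting, and takes the Data Lake price as a parameter because it is not stated here; `lake_aud_per_gb` and the example volumes are hypothetical inputs, not published pricing.

```python
# Sentinel analytics price quoted in this article (AUD per GB, Australia).
SENTINEL_AUD_PER_GB = 11.0


def split_daily_cost(total_gb: float, realtime_fraction: float, lake_aud_per_gb: float) -> dict:
    """Estimate daily cost when only a fraction of logs goes through Sentinel.

    lake_aud_per_gb is a hypothetical input: supply your own Data Lake price.
    """
    if not 0.0 <= realtime_fraction <= 1.0:
        raise ValueError("realtime_fraction must be between 0 and 1")
    realtime_gb = total_gb * realtime_fraction  # logs that need real-time alerting
    lake_gb = total_gb - realtime_gb  # everything else lands in the Data Lake
    sentinel_aud = realtime_gb * SENTINEL_AUD_PER_GB
    lake_aud = lake_gb * lake_aud_per_gb
    return {
        "realtime_gb": realtime_gb,
        "lake_gb": lake_gb,
        "sentinel_aud": sentinel_aud,
        "lake_aud": lake_aud,
        "total_aud": sentinel_aud + lake_aud,
        # What the same volume would cost if every GB went through Sentinel.
        "all_sentinel_aud": total_gb * SENTINEL_AUD_PER_GB,
    }


# Hypothetical example: 1 TB/day, 1% needs real-time alerting, lake price assumed.
estimate = split_daily_cost(total_gb=1000.0, realtime_fraction=0.01, lake_aud_per_gb=0.5)
print(round(estimate["sentinel_aud"], 2), round(estimate["all_sentinel_aud"], 2))
```

The Sentinel line is the one that scales with how much you want to alert on, which is exactly the trade-off this design leaves in place.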

On the surface, the Sentinel Data Lake addresses all the competing needs of Microsoft's customers and business factions. My guess is that the whole solution stems from the initial design decision to stream to a Storage Account, and I feel the real Big Data game-changer for Security is being missed.

What's Right with This Solution?

Long Term Log Retention - Having a turn-key solution for storing more than 2 years of security logs is overdue. For the vast majority of Microsoft's customers, the overhead of running Event Hubs and Azure Data Explorer has been an unnecessary cost.

Achieving this capability within Sentinel is undoubtedly a win.

For most of Microsoft’s customers, the prospect of trying to run a real Big Data SOC internally while getting value for spend is wildly unrealistic. For 99% of the industry, deploying Defender throughout the enterprise, turning on MFA and using Sentinel as a simple SIEM may make the most business sense.

What's Wrong with This Solution?

Regulated organisations of national significance will discover shortfalls between the needs of a Big Data SOC and what the Sentinel Data Lake delivers. Major enterprises are nowhere close to being “secure” today, and we need a step-change in the volume of data we capture and analyse to start getting ahead of our current failings. This is what M-21-31 tried to mandate.

1. Real-time Alerting - The point of the M-21-31 directive is that the modern threat landscape requires security monitoring of everything, including unexpected resource utilisation by workloads. A Storage Account of archived logs that sit outside any real-time alerting capability misses Security's critical needs for a Big Data SIEM.

2. Real-time Performance Metrics - M-21-31 also requires federal agencies to stream system metrics and performance counters into SIEM storage. This can be done well using a dedicated, segmented Azure Data Explorer cluster integrated with Grafana or Prometheus, allowing both Security and Technology teams to benefit from data that has to be collected for security anyway. This requirement is not captured by the Sentinel Data Lake solution.

3. Inconsistent Schema Use - A lack of schema standardisation between Microsoft project teams has been painful, and the Sentinel Data Lake makes it worse. Online schema documentation doesn't reliably match the schemas of Log Analytics. Columns in Defender tables have different data types from the same columns in the equivalent Log Analytics tables, and the meaning of the TimeGenerated field is inconsistent between tables as well. The hope with ASIM was that Microsoft was standardising schemas, but instead the new default tables in the Sentinel Data Lake follow a completely new case-formatting standard for tables. My pet irritation was seeing the standard Log Analytics field TimeReceived replaced by ReceivedTime. There still seems to be an unresolved lack of standardisation within Microsoft, where every team comes up with its own schemas. In a Big Data world, schema standardisation is important.

Even more disappointing, the mechanism for moving standardised logs from the Sentinel Data Lake into Sentinel forces the table names to change. Having to handle every query twice to accommodate restored data is unhelpful, to say the least.

4. Dependency on Data Connectors - Every large organisation has a myriad of custom systems producing bespoke logging that requires SIEM ingestion. After 5 years, Sentinel's data connector library is still incomplete for Enterprise needs. Perhaps deploying bespoke Function Apps and Logic Apps was always going to be a necessity.

The use of Event Hubs and Kafka for custom data ingestion is much easier for internal engineers to work with than wrangling with bespoke Data Collection Rules and Log Analytics Custom Tables. Knowing how Data Connectors are created today, my suspicion is that DCRs will end up being the official ingestion method for the Sentinel Data Lake too.

5. One Lake - As Microsoft moves toward a flat data landscape with Fabric, it looks clear that Security's SIEM data will be just one element of that unified Data Lake. This is a problem for those of us working in regulated environments, where Security Logs must be stored in separate networks and kept out of reach in the event of a Privileged Identity compromise.

Fabric is still in its early iterations, and it lacks the layered security controls we have built around on-prem and PaaS systems. For regulated industries, Security teams should be wary of this innovation. As CEOs and Boards are universally demanding AI yesterday, this may be a security battle already lost.

Microsoft's competitive advantage over Amazon and Google is that almost all firms seem to be using O365 for business data. Bringing the Power Platform Dataverse together with O365, Azure and Defender/Sentinel data in one data engineering tool leveraged by Copilot is undoubtedly a compelling business strategy.
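The schema inconsistencies in point 3 above tend to end up being patched in code. Here is a minimal Python sketch of a normalisation layer, assuming you maintain your own alias maps: the `ReceivedTime` to `TimeReceived` mapping comes from the example in point 3, while the restored table name `SigninLogs_Restored` is a hypothetical placeholder, not the name Sentinel actually assigns.

```python
# Hypothetical alias maps: populate them with the mismatches you actually find.
COLUMN_ALIASES = {"ReceivedTime": "TimeReceived"}
TABLE_ALIASES = {"SigninLogs_Restored": "SigninLogs"}  # placeholder restored-table name


def normalise(table: str, row: dict) -> tuple:
    """Map a table name and its column names onto one canonical schema."""
    canonical_table = TABLE_ALIASES.get(table, table)
    canonical_row: dict = {}
    for column, value in row.items():
        name = COLUMN_ALIASES.get(column, column)
        if name in canonical_row:
            # Both spellings present in one row: refuse to silently overwrite either.
            raise ValueError(f"column collision on {name!r} in table {table!r}")
        canonical_row[name] = value
    return canonical_table, canonical_row


print(normalise("SigninLogs_Restored",
                {"ReceivedTime": "2024-01-01T00:00:00Z", "UserPrincipalName": "a@example.com"}))
```

Every downstream query can then target one table name and one column spelling, instead of being maintained twice for live and restored data.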

The Sports Car in the Garage

Over the past 5 years, the official solution for long-term log retention with Sentinel was to deploy a Premium SKU Event Hub Namespace with a standard SKU Azure Data Explorer (ADX) cluster. The Sentinel architecture advice was simply to use ADX as a bucket to catch logs that had passed through Sentinel.

Using the most powerful analytics platform on the planet as a storage container for archived Sentinel logs is like having a high-end sports car in the garage and only using it to drive to the letterbox. I’ve never seen an official Microsoft architecture that recommended sending Security logs directly into the ADX analytics platform, but it’s an obvious yet seldom-used application of the technology.

Abandoning the traditional Sentinel/ADX architecture pattern to stream logs to a Storage Account (that can be parsed by a shared ADX cluster through the Sentinel Portal) gets rid of that sports car, leaving no hope of ever obtaining the affordable, real-time Big Data monitoring that modern security demands.

ADX was designed for real-time analysis of petabyte-scale ingest and has been stress-tested over years of Microsoft internal use. The tragedy of the Sentinel Data Lake (a.k.a. a Storage Account) is that the opportunity to bring Security Operations into complete signals awareness has been squandered.

Sentinel as a Case Management system is excellent, but it's too basic for the demands of modern Security Operations. Splunk seems to be going through a resurgence, in large part because ADX hasn't been promoted as an Enterprise SIEM capability and because forwarding curated data from ADX to Sentinel still requires bespoke development.
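The bespoke forwarding piece is conceptually small. The Python sketch below shows its shape: evaluate detection rules against a stream of events, keep non-matching events out of Sentinel entirely, and hand matches to a forwarder in batches. In a real deployment the filtering would more naturally be written in KQL inside ADX, and the forwarder would be a Function App calling something like the Azure Monitor Logs Ingestion API; here the rule names, event fields and `forward` callable are hypothetical stand-ins.

```python
from typing import Callable, Iterable

Event = dict
Rule = Callable[[Event], bool]


def filter_and_forward(events: Iterable[Event], rules: dict,
                       forward: Callable[[list], None], batch_size: int = 100) -> int:
    """Forward only rule-matching events in batches; return how many were forwarded."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    batch: list = []
    forwarded = 0
    for event in events:
        matched = [name for name, rule in rules.items() if rule(event)]
        if not matched:
            continue  # stays in ADX only; never reaches Sentinel's per-GB ingest
        batch.append({**event, "MatchedRules": matched})
        if len(batch) >= batch_size:
            forward(batch)
            forwarded += len(batch)
            batch = []
    if batch:  # flush the final partial batch
        forward(batch)
        forwarded += len(batch)
    return forwarded


# Hypothetical rule and events; EventID 4625 is a Windows failed logon.
sent_batches: list = []
rules = {"failed_logon": lambda e: e.get("EventID") == 4625}
events = [{"EventID": 4624}, {"EventID": 4625}, {"EventID": 4625}]
print(filter_and_forward(events, rules, sent_batches.append))
```

Only the two matching events are forwarded; the successful logon never leaves ADX, which is the whole cost argument in miniature.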

Why Azure Data Explorer Still Remains Microsoft's Best Answer for Big Data and SOC

1. Real-time Big Data Alerting - Although custom Function App development is required to forward event data to Sentinel, ADX can parse and filter enormous quantities of real-time data within seconds. This allows Security Operations to manage alerting signals on all systems without filtering or delay. In Australia, it costs $11 per GB to parse logs for real-time alerting through Sentinel, so most Enterprise organisations have been forced to abandon alerting on 99% of log data to stay within budget. ADX provides a real-time filtering capability that gives the SOC all the data it needs without Directors being fired over bill shock!

2. Layered Security Controls and Redundancy - The Australian Signals Directorate provides clear guidance for Australian organisations of significance that: "The storage of logs should be in a separate or segmented network with additional security controls to reduce the risk of logs being tampered with in the event of network or system compromise. Event logs should also be backed up and data redundancy practices should be implemented."

Azure Data Explorer already supports the enforcement of separate network security controls and, by using Event Hubs for data ingestion, redundant copies of logs can be streamed to external ADX clusters in isolated Security Tenants.

3. Performance Metrics Monitoring and Alerting - ADX already has integrations for collecting, storing and analysing performance metrics with Grafana and Prometheus. Performance Counter monitoring as a security responsibility is mandated by M-21-31 for US federal entities. This may require investments in Data Pipeline products like Cribl and VirtualMetric due to limitations with the Azure Monitor Agent, but the mandate for Performance Data to be a Security monitoring capability is already there.

4. Storage Costs - Under the hood, Azure Data Explorer uses Storage Accounts – in reality, all Microsoft Services do. Columnar file formats (e.g. Parquet) do provide excellent compression. ADX, Fabric and the Sentinel Data Lake all keep compressed columnar data on Storage Accounts, so you would instinctively think the compression rates achieved (which determine cost) would be the same. I really doubt it.

I set up a continuous export of ADX data to Storage some time back and was shocked at how quickly the costs escalated. The reason was that frequently streaming small Parquet files into a Storage Account negates the optimal compression of data managed within ADX. It turned out to be much cheaper to run secondary ADX clusters for data backup than to export Parquet files to a Storage Account as an archive.

I'm sure the cost of streaming log data directly to the Sentinel Data Lake Storage Account will quickly start to surpass the cost of running ADX in Enterprise environments.

5. Capability Enhancements - Modern Security Operations needs to be a Big Data Analytics team. It needs to be correlating and graphing data from multiple sources. It needs to be comfortable creating Materialised Views and managing schemas. With centralised, structured data, Security can get rid of two-thirds of the expensive and archaic security tooling it typically pays for and can start to adopt the advanced data capabilities built into ADX. If you haven't seen it, take a look at how a serious SOC can use ADX and telemetry with Graphistry: https://www.youtube.com/watch?v=1ylScDF3O_A

6. Native Machine Learning Integration - Structured data within ADX is already available for Machine Learning with the Spark connector for Azure Data Explorer: https://learn.microsoft.com/en-us/azure/data-explorer/spark-connector

Planning for Machine Learning is another requirement of M-21-31 already catered for out-of-the-box with ADX.

7. Multiple Domain Portals - When Enterprise data is centralised, structured, complete and contains event and performance data from all systems, it becomes a business asset that multiple teams need to be able to query and report against without compromising Security's confidentiality needs.

ADX supports separate teams having separate databases fed from the same Data Connectors. Network Teams can work with network data, and Technology, Patching, Application, Red and Blue Teams can all have their own segmented view of real-time data with enforced Layered Security Controls, while Security gets access to the lot. There's a high chance your cloud technology teams are already using Azure FinOps (which is ADX)!
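The small-file effect described in point 4 above is easy to reproduce in miniature. This Python sketch uses zlib as a stand-in for Parquet's compression codecs and synthetic log records with hypothetical fields: the same records are compressed once as a single file, then as many small files. Compressing small chunks independently loses the redundancy shared across records and pays per-file overhead every time. It does not model Parquet metadata or Storage Account transaction charges, so treat it as an illustration of the direction of the effect, not a cost estimate.

```python
import json
import zlib


def make_log_lines(n: int) -> list:
    """Synthetic, repetitive JSON log records (hypothetical fields)."""
    return [
        json.dumps({
            "TimeGenerated": f"2024-01-01T00:00:{i % 60:02d}Z",
            "Computer": f"host{i % 5}",
            "EventID": 4624 + (i % 2),
            "Account": f"user{i % 10}",
        }).encode()
        for i in range(n)
    ]


def compressed_bytes(chunks: list) -> int:
    """Total size when each chunk (a stand-in for one file) is compressed on its own."""
    return sum(len(zlib.compress(chunk, 9)) for chunk in chunks)


def compare(lines: list, records_per_small_file: int) -> tuple:
    """Return (one large file size, many small files size) after compression."""
    one_large = compressed_bytes([b"\n".join(lines)])
    small_files = [
        b"\n".join(lines[i:i + records_per_small_file])
        for i in range(0, len(lines), records_per_small_file)
    ]
    return one_large, compressed_bytes(small_files)


large, small = compare(make_log_lines(5000), records_per_small_file=10)
print(f"one large file: {large} bytes, many small files: {small} bytes")
```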

But What About Fabric?

Microsoft Fabric should frighten Security Architects. Most of us are still bruised by the lack of security controls when HDInsight and the PowerApps Platform were introduced. The guiding principle that every security control will fail at some point in its lifetime has shaped Big Data platform architecture for a decade.

Fabric's promise is to bypass the layers of zonal controls and essentially create a flat data environment – exactly what Data Engineers have been asking for.

We have designed Big Data stacks with layered security controls and Defence regulations so that misconfiguration and authorisation failures could not expose data. The days of those architectures are numbered.

The vast majority of Microsoft's customers probably don't care about the thousands of hours of effort that went into devising Layered Defence for Big Data systems, or about the principles of log externalisation. The promise of unleashing AI on all Enterprise data will be too tempting within most organisations for Security concerns to make a difference.

Fabric is already here and its emergence as a core capability in all major firms is probably unstoppable at this point.

Even if Security teams in regulated environments aren't prepared to pour security data into this enormous Data Lake, it's only a matter of time before it becomes an accepted practice.

However, the often-used line that "the Sentinel Data Lake is built upon Fabric Technology" is somewhat meaningless. Fabric can integrate with any Storage Account, but it can also integrate with Event Hubs and existing ADX clusters. Security staff can already choose to share specific ADX tables with Fabric. We could even feed a Fabric variant of ADX (the Fabric EventHouse) from the same Event Hubs that supply the PaaS ADX cluster.

There is nothing about continuing to use Azure Data Explorer today that prevents a cautious adoption of Fabric and a considered socialisation of security data when Security stakeholders are ready.
